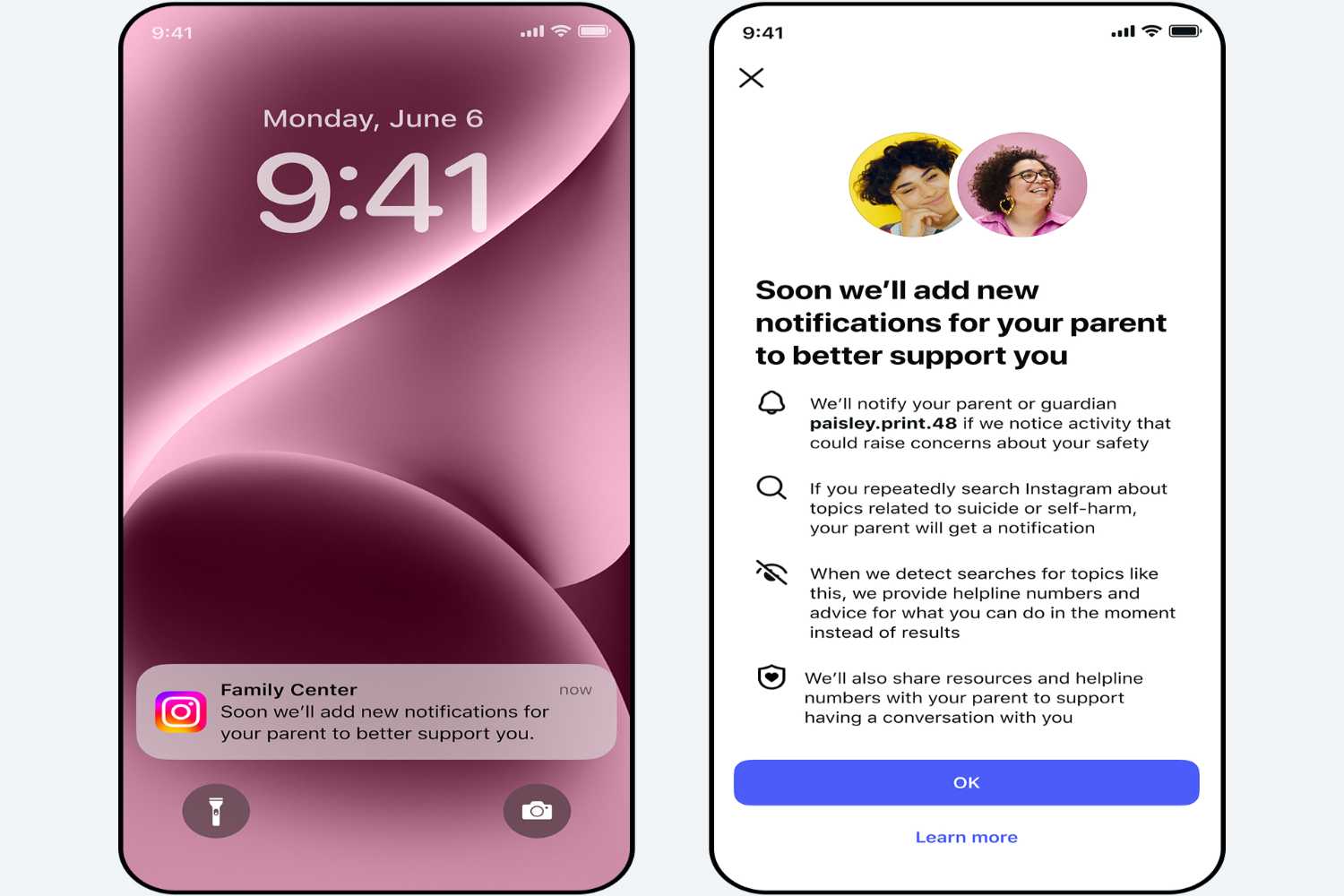

Meta has announced that Instagram will begin notifying parents when their teenage children repeatedly search for topics such as suicide or self-harm within a short period of time. The message can arrive via the app, text message or even WhatsApp. It is not a simple technical notice, but an alarm signal on a very delicate topic that is more current than ever.

The new feature applies to teen accounts linked to an active supervision system. Only adults who have chosen to activate supervision will receive the alert. This is not generalized monitoring, but a targeted option designed to intercept potentially critical situations.

How the new protection works

The mechanism is activated when a minor frequently uses the search function to look for content related to self-harm. In that case, in addition to the automatic blocking of sensitive results and redirection to support resources, a notification will be sent directly to the parent's account.

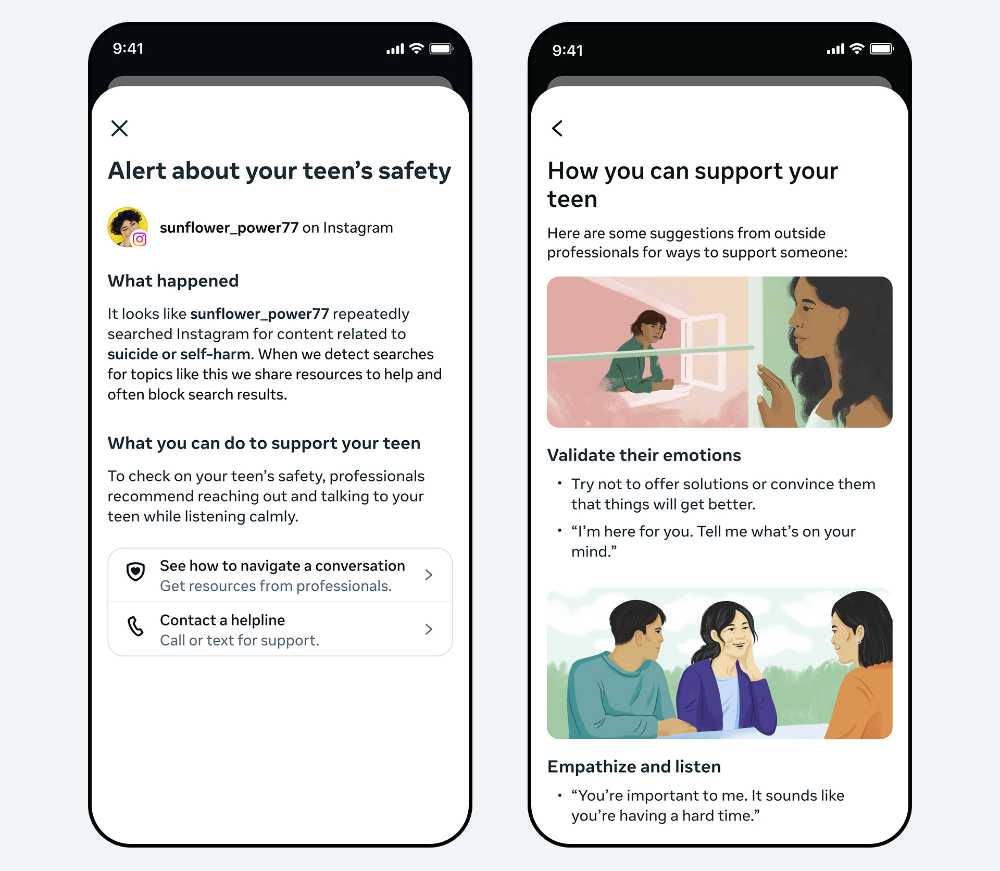

The alert doesn't just report behavior. It will be accompanied by informational materials developed with input from experts and designed to help adults handle difficult conversations with their children. The stated objective is to shift attention from simple surveillance to conscious prevention. The system will initially launch in the United States, the United Kingdom, Australia and Canada. Other countries, including France and Spain, are preparing to adopt similar measures, while extension to other areas is expected in the coming months.

From search to chatbots: the perimeter is widening

The change won't stop at searches for photos or Reels. In the coming months, notifications will also cover interactions with Meta AI, the chatbot integrated into the platform's ecosystem. The choice reflects a now evident reality: many teenagers, when looking for answers to intimate or painful questions, turn precisely to conversational AI.

The topic is delicate. According to an investigation reported by Reuters, Meta's internal research has shown the presence of eating disorders among adolescents regularly exposed to content that causes discomfort about their own bodies. The finding reinforces the need for more incisive protection tools.

Between safety and digital responsibility

The new feature is part of a broader effort to protect younger users. Instagram already limits access to harmful content and redirects users to helplines, but with this step it introduces an additional element: shared responsibility with the family.

The debate on the boundary between protection and privacy remains open. However, the message is clear: faced with repeated signs of distress, the platform has chosen not to remain neutral. In a digital environment where searches can be silent and invisible, a simple alert could turn into a life saved.