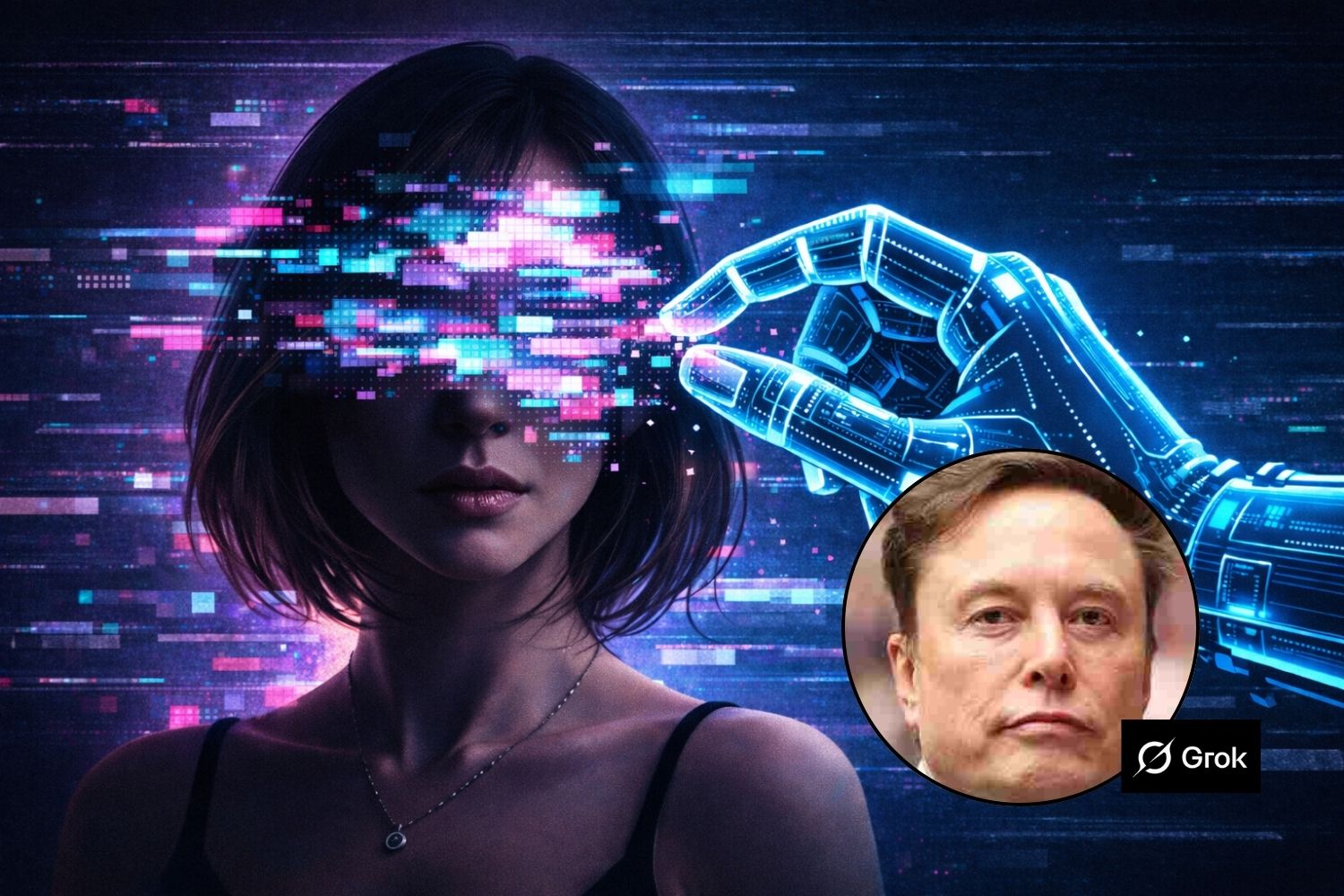

It allowed “the creation of sexually explicit images”, obviously without their consent. For this reason, three American girls have taken xAI, Elon Musk’s artificial intelligence company, to court, accusing its chatbot Grok of generating pornographic images from some of their real photos.

The lawsuit follows the formal investigation that Brussels launched in recent weeks. In the United States it could involve more than a thousand minor victims, and it is connected to the deepfakes published on X over New Year’s Eve, which California is also investigating.

“xAI chose to profit from the sexual predation of real people, including children, despite knowing full well the consequences of creating such a dangerous product,” said Vanessa Baehr-Jones, an attorney for the plaintiffs.

What happened

The teenagers discovered that AI-manipulated content, including deepfake images and videos, was circulating online: it had been uploaded to Discord servers and then shared on other platforms, such as Telegram.

In one case, one of the girls received an anonymous message on Instagram warning her that content depicting her naked and in sexualized poses, along with some of her peers, was circulating. Some of the images were allegedly generated from photos taken when she was still a minor, including shots from the school register.

According to the complaint, this material was also used as “bargaining chips” in illegal networks to obtain other child sexual abuse material.

After the girls reported the case to the authorities, police arrested a suspect and found illegal content on his phone that had allegedly been generated with tools based on xAI technology.

The lawsuit alleges that the material was created through third-party applications that use Grok under official licenses. According to the complaint, even though the content was not produced directly on the X platform, these applications still relied on xAI’s servers, and xAI profited from the spread of its technology.

The plaintiffs’ lawyers accuse the company of effectively shifting responsibility onto external developers without adequately controlling how its tools are used.

A global (and growing) problem

The case is part of a broader wave of investigations and lawsuits against xAI over the generation of non-consensual sexualized images. According to some analyses, Grok produced millions of such images in a few weeks, including thousands involving minors.

Elon Musk has previously denied that the system had been used to create illegal content, saying he was not aware of any images of minors generated by the platform and that Grok was designed to comply with local laws.

This case inevitably puts the spotlight back on an increasingly urgent issue: the unchecked use of artificial intelligence to manipulate images and the lack of effective safeguards, especially when minors are affected.